Terraform automation: Control center (Stepstone, DNS, NTP, AD, Etc.) and Terraform automation: NAS Server (CIFS, NFS, Etc.): Difference between pages

No edit summary |

No edit summary |

||

| Line 1: | Line 1: | ||

To provide storage services inside each lab environment I have created a FreeNAS server VM that will be pr-deployed and pre-configured so that it can be cloned into different lab environments. | |||

This is nothing new, as we are also doing this with other VM's within the nested virtual lab environment like with the vCenter Server, the Control Server and the Cumulus network infrastructure VM’s. | |||

== Installation == | |||

The first step is to get the .ISO file that I will need to do a fresh install of FreeNAS, | |||

I downloaded this [https://www.freenas.org/download-freenas-release/](https://www.freenas.org/download-freenas-release/ here]. | |||

I deployed a new virtual machine with the following specs/parameters: | |||

* Name = “nas-template” | |||

* | * Guest OS Family = "Other" | ||

* Guest OS Version = "FreeBSD 11 64-bit" | |||

* | * CPU = 4 | ||

* | * RAM = 8 GB | ||

* | * HD1 = 8 GB (used for OS install) | ||

* | * HD2 = 500 GB (used for storage) | ||

* | |||

* | |||

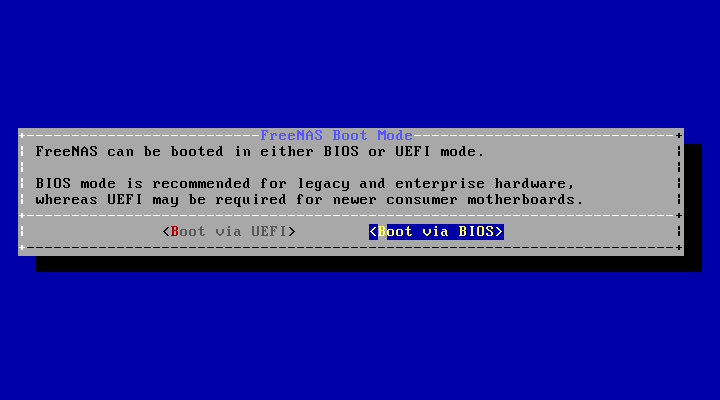

After the | During installation choose to boot from BIOS: | ||

[[File:installer-boot-mode.png]] | |||

You can log in with the username `root` and the password you provided during the installation wizard. | |||

After the initial installation and the first FreeNAS boot a console will pop up where you can configure network settings like IP address, gateway and DNS. | |||

This is called the “Network console". | |||

If you shut down the console (like I did not by intention) you can always start it with `/etc/netcli` . | |||

{{note|Full installation steps for FreeNAS can be found [https://www.ixsystems.com/documentation/freenas/11.3-U1/install.html here].}} | |||

Log in to the GUI after setting the IP address with `root` and the password that you provided during the initial installation. | |||

== Adding a new disk pool == | |||

In order to use storage space the first step in FreeNAS is to create a new “Disk” Pool: | |||

[[File:screenshot_1530.png|600px]] | |||

== Create share and enable NFS == | |||

When we have a disk pool we can create a share and enable a filesharing service, in my case NFS. | |||

Create a Share and enable the NFS service: | |||

[[File:screenshot_1531.png|600px]] | |||

At this point you would say that it is good to configure the new NFS Datastore directly into the vCenter Server. | |||

This is not possible yet, as we first need to configure the Datacenter, Hosts and Clusters. | |||

This is something we will do in a later script. | |||

Now that the initial NAS is finished we can start to create the terraform script for cloning. | |||

{{console|body= | {{console|body= | ||

| Line 68: | Line 58: | ||

'''CLICK ON EXPAND ===> ON THE RIGHT ===> TO SEE THE OUTPUT (terraform.tfvars code) ===>''' : | '''CLICK ON EXPAND ===> ON THE RIGHT ===> TO SEE THE OUTPUT (terraform.tfvars code) ===>''' : | ||

<div class="mw-collapsible-content">{{console|body= | <div class="mw-collapsible-content">{{console|body= | ||

vsphere_user = “administrator@vsphere. | vsphere_user = “administrator@vsphere.local" | ||

vsphere_password = “<my vCenter Server Password> | vsphere_password = “<my vCenter Server Password>" | ||

vsphere_server = | vsphere_server = “vcsa-01.home.local” | ||

vsphere_datacenter = “HOME” | vsphere_datacenter = “HOME” | ||

vsphere_datastore = “vsanDatastore” | vsphere_datastore = “vsanDatastore” | ||

vsphere_resource_pool = “Lab1” | vsphere_resource_pool = “Lab1” | ||

vsphere_network = “L1-APP-MGMT11” | vsphere_network = “L1-APP-MGMT11” | ||

vsphere_virtual_machine_template = | vsphere_virtual_machine_template = “nas-template” | ||

vsphere_virtual_machine_name = “l1- | vsphere_virtual_machine_name = “l1-nas” | ||

}}</div> | }}</div> | ||

</div> | </div> | ||

| Line 85: | Line 75: | ||

<div class="mw-collapsible-content">{{console|body= | <div class="mw-collapsible-content">{{console|body= | ||

# vsphere login account. defaults to admin account | # vsphere login account. defaults to admin account | ||

variable | variable "vsphere_user" { | ||

default = "administrator@vsphere. | default = "administrator@vsphere.local” | ||

} | } | ||

| Line 124: | Line 114: | ||

<div class="mw-collapsible-content">{{console|body= | <div class="mw-collapsible-content">{{console|body= | ||

provider “vsphere” { | provider “vsphere” { | ||

user = | user = "${var.vsphere_user}" | ||

password = "${var.vsphere_password} | password = "${var.vsphere_password}” | ||

vsphere_server = | vsphere_server = “${var.vsphere_server}” | ||

allow_unverified_ssl = true | allow_unverified_ssl = true | ||

} | } | ||

| Line 151: | Line 141: | ||

data “vsphere_virtual_machine” “template” { | data “vsphere_virtual_machine” “template” { | ||

name = “${var.vsphere_virtual_machine_template}” | name = “${var.vsphere_virtual_machine_template}” | ||

datacenter_id = “${data.vsphere_datacenter.dc.id} | datacenter_id = “${data.vsphere_datacenter.dc.id}" | ||

} | } | ||

resource | resource "vsphere_virtual_machine" "cloned_virtual_machine” { | ||

name = “${var.vsphere_virtual_machine_name}” | name = “${var.vsphere_virtual_machine_name}” | ||

wait_for_guest_net_routable = false | wait_for_guest_net_routable = false | ||

wait_for_guest_net_timeout = 0 | wait_for_guest_net_timeout = 0 | ||

resource_pool_id = “${data.vsphere_resource_pool.pool.id}” | resource_pool_id = “${data.vsphere_resource_pool.pool.id}” | ||

datastore_id = “${data.vsphere_datastore.datastore.id}” | datastore_id = “${data.vsphere_datastore.datastore.id}” | ||

num_cpus = | num_cpus = 4 | ||

memory = 8192 | memory = 8192 | ||

| Line 182: | Line 169: | ||

disk { | disk { | ||

label = “disk0” | label = “disk0” | ||

size = | size = “8” | ||

# unit_number = 0 | # unit_number = 0 | ||

} | } | ||

disk { | |||

label = “disk1” | |||

size = “500” | |||

unit_number = 1 | |||

} | |||

clone { | clone { | ||

template_uuid = “${data.vsphere_virtual_machine.template.id}” | template_uuid = “${data.vsphere_virtual_machine.template.id}” | ||

| Line 191: | Line 185: | ||

}}</div> | }}</div> | ||

</div> | </div> | ||

Validate your code: | Validate your code: | ||

{{console|body= | {{console|body= | ||

ihoogendoor-a01:#Test iwanhoogendoorn$ tfenv use 0. | ihoogendoor-a01:#Test iwanhoogendoorn$ tfenv use 0.12.24 | ||

[INFO] Switching to v0. | [INFO] Switching to v0.12.24 | ||

[INFO] Switching completed | [INFO] Switching completed | ||

ihoogendoor-a01:Test iwanhoogendoorn$ terraform validate | ihoogendoor-a01:Test iwanhoogendoorn$ terraform validate | ||

| Line 216: | Line 208: | ||

ihoogendoor-a01:Test iwanhoogendoorn$ terraform destroy | ihoogendoor-a01:Test iwanhoogendoorn$ terraform destroy | ||

}} | }} | ||

== Sources == | |||

* [https://www.youtube.com/watch?v=QeKJ2dmJTcI Source 1] | |||

* [https://www.christitus.com/setup-freenas-11/ Source 2] | |||

[[Category:Articles]] | [[Category:Articles]] | ||

Revision as of 21:19, 12 January 2024

To provide storage services inside each lab environment I have created a FreeNAS server VM that is pre-deployed and pre-configured so that it can be cloned into different lab environments. This is nothing new, as we do the same with other VMs within the nested virtual lab environment, such as the vCenter Server, the Control Server and the Cumulus network infrastructure VMs.

Installation

The first step is to get the .ISO file needed for a fresh install of FreeNAS. I downloaded it [here](https://www.freenas.org/download-freenas-release/).

I deployed a new virtual machine with the following specs/parameters:

- Name = “nas-template”

- Guest OS Family = "Other"

- Guest OS Version = "FreeBSD 11 64-bit"

- CPU = 4

- RAM = 8 GB

- HD1 = 8 GB (used for OS install)

- HD2 = 500 GB (used for storage)

During installation, choose to boot via BIOS.

You can log in with the username `root` and the password you provided during the installation wizard. After the initial installation and the first FreeNAS boot, a console will pop up where you can configure network settings such as the IP address, gateway and DNS. This is called the "Network console". If you close the console (as I did by accident), you can always start it again with `/etc/netcli`.

Full installation steps for FreeNAS can be found [here](https://www.ixsystems.com/documentation/freenas/11.3-U1/install.html).

After setting the IP address, log in to the GUI as `root` with the password that you provided during the initial installation.

Adding a new disk pool

Before any storage space can be used, the first step in FreeNAS is to create a new disk pool.

Once the disk pool exists, we can create a share and enable a file-sharing service for it; in my case this is NFS.

At this point it would seem logical to add the new NFS datastore directly to the vCenter Server. This is not possible yet, because the Datacenter, Hosts and Clusters need to be configured first. We will do that in a later script.
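For orientation only, that later step might look roughly like the sketch below, which uses the vSphere provider's `vsphere_nas_datastore` resource. Every concrete value here (the datacenter name `LAB1-DC`, the host name, the NAS address, the export path and the datastore name) is a hypothetical placeholder and not taken from this lab. It is an assumption about how the share could be mounted once the Datacenter, Hosts and Clusters exist, not the script that is actually used later.

```hcl
# Sketch only: all names, addresses and paths below are hypothetical.

# Look up the (nested) datacenter that will own the datastore.
data "vsphere_datacenter" "lab_dc" {
  name = "LAB1-DC"
}

# Look up one ESXi host that should mount the NFS export.
data "vsphere_host" "lab_host" {
  name          = "esxi-01.lab.local"
  datacenter_id = data.vsphere_datacenter.lab_dc.id
}

# Mount the FreeNAS NFS export as a datastore on that host.
resource "vsphere_nas_datastore" "nfs" {
  name            = "l1-nfs-datastore"
  host_system_ids = [data.vsphere_host.lab_host.id]
  type            = "NFS"
  remote_hosts    = ["192.0.2.10"]
  remote_path     = "/mnt/pool1/nfs"
}
```

The fragment still needs a `provider "vsphere"` block pointing at the vCenter Server that manages those hosts before it can be planned.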

Now that the initial NAS is finished, we can start creating the Terraform script for cloning.

❯ tree
├── main.tf
├── terraform.tfvars
├── variables.tf

terraform.tfvars


vsphere_user                     = "administrator@vsphere.local"
vsphere_password                 = "<my vCenter Server Password>"
vsphere_server                   = "vcsa-01.home.local"
vsphere_datacenter               = "HOME"
vsphere_datastore                = "vsanDatastore"
vsphere_resource_pool            = "Lab1"
vsphere_network                  = "L1-APP-MGMT11"
vsphere_virtual_machine_template = "nas-template"
vsphere_virtual_machine_name     = "l1-nas"

variables.tf


# vsphere login account. defaults to admin account
variable "vsphere_user" {
  default = "administrator@vsphere.local"
}

# vsphere account password.
variable "vsphere_password" {
  default = "<my vCenter Server Password>"
}

# vsphere server. defaults to vcsa-01.home.local
variable "vsphere_server" {
  default = "vcsa-01.home.local"
}

# vsphere datacenter the virtual machine will be deployed to. empty by default.
variable "vsphere_datacenter" {}

# vsphere resource pool the virtual machine will be deployed to. empty by default.
variable "vsphere_resource_pool" {}

# vsphere datastore the virtual machine will be deployed to. empty by default.
variable "vsphere_datastore" {}

# vsphere network the virtual machine will be connected to. empty by default.
variable "vsphere_network" {}

# vsphere virtual machine template that the virtual machine will be cloned from. empty by default.
variable "vsphere_virtual_machine_template" {}

# the name of the vsphere virtual machine that is created. empty by default.
variable "vsphere_virtual_machine_name" {}
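As an optional refinement, a variable declaration can also carry a `type` and a `description`, both of which Terraform 0.12 supports. The sketch below is an assumed variant of the password variable and not the declaration used in this article: it deliberately has no `default`, so the value has to come from `terraform.tfvars`, from the `TF_VAR_vsphere_password` environment variable, or from the interactive prompt.

```hcl
# Sketch only: an alternative declaration without a default value.
variable "vsphere_password" {
  type        = string
  description = "Password for the vSphere login account."
}
```

Leaving out the default keeps the password out of `variables.tf`, which matters if that file ends up in version control.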

main.tf


provider "vsphere" {
  user                 = var.vsphere_user
  password             = var.vsphere_password
  vsphere_server       = var.vsphere_server
  allow_unverified_ssl = true
}

data "vsphere_datacenter" "dc" {
  name = var.vsphere_datacenter
}

data "vsphere_datastore" "datastore" {
  name          = var.vsphere_datastore
  datacenter_id = data.vsphere_datacenter.dc.id
}

data "vsphere_resource_pool" "pool" {
  name          = var.vsphere_resource_pool
  datacenter_id = data.vsphere_datacenter.dc.id
}

data "vsphere_network" "network" {
  name          = var.vsphere_network
  datacenter_id = data.vsphere_datacenter.dc.id
}

data "vsphere_virtual_machine" "template" {
  name          = var.vsphere_virtual_machine_template
  datacenter_id = data.vsphere_datacenter.dc.id
}

resource "vsphere_virtual_machine" "cloned_virtual_machine" {
  name                        = var.vsphere_virtual_machine_name
  wait_for_guest_net_routable = false
  wait_for_guest_net_timeout  = 0
  resource_pool_id            = data.vsphere_resource_pool.pool.id
  datastore_id                = data.vsphere_datastore.datastore.id

  # same sizing as the nas-template VM
  num_cpus = 4
  memory   = 8192
  # num_cpus = data.vsphere_virtual_machine.template.num_cpus
  # memory   = data.vsphere_virtual_machine.template.memory

  guest_id  = data.vsphere_virtual_machine.template.guest_id
  scsi_type = data.vsphere_virtual_machine.template.scsi_type

  network_interface {
    network_id   = data.vsphere_network.network.id
    adapter_type = data.vsphere_virtual_machine.template.network_interface_types[0]
  }

  # HD1: 8 GB, used for the OS install
  disk {
    label = "disk0"
    size  = 8
    # unit_number = 0
  }

  # HD2: 500 GB, used for storage
  disk {
    label       = "disk1"
    size        = 500
    unit_number = 1
  }

  clone {
    template_uuid = data.vsphere_virtual_machine.template.id
  }
}

Validate your code:

ihoogendoor-a01:Test iwanhoogendoorn$ tfenv use 0.12.24
[INFO] Switching to v0.12.24
[INFO] Switching completed
ihoogendoor-a01:Test iwanhoogendoorn$ terraform validate
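Since the commands above select Terraform 0.12.24 with tfenv, the configuration can also state that expectation itself. The block below is a minimal sketch and not part of the original files. The constraint `~> 0.12.24` is an assumption about how strictly the version should be pinned; it accepts 0.12.24 and later 0.12.x patch releases, but not 0.13.

```hcl
# Sketch only: refuse to run with a Terraform release outside the 0.12.x line.
terraform {
  required_version = "~> 0.12.24"
}
```

With this block in `main.tf`, running any Terraform command with a non-matching binary stops with a version error before anything is planned or applied.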

Plan your code:

ihoogendoor-a01:Test iwanhoogendoorn$ terraform plan

Execute your code to deploy the NAS VM:

ihoogendoor-a01:Test iwanhoogendoorn$ terraform apply

When the NAS VM needs to be removed again, you can revert the deployment:

ihoogendoor-a01:Test iwanhoogendoorn$ terraform destroy